When we talk about the mobile Web being a good candidate to be a unifying platform for mobile and beyond, we often get nay-sayers telling us that there is no chance of this happening.

The claims they chant most often include:

- Cross platform never works (case in point: Swing)

- You can’t create a great experience without going native (and Apple raised the bar on the experience!)

There is some validity to this, but I also want to discuss the other side.

Cross Platform Did Work

Swing gets bashed because a) it didn’t take off, and b) people always saw Swing apps as ugly and great examples of the uncanny valley. That team worked tirelessly for many years to make each look and feel match its host as exactly as possible. A pixel off here and there… and it felt wrong.

It turns out that this probably wasn’t the right approach. The better options were either a) use SWT to get the real OS components, or b) create a great-looking l&f that is different from any one host, but natural and fantastic to use. Ben, Jasper Potts, and others fought for such a look and feel in Nimbus, but by then a lot of time had gone by.

We have all seen many platforms on top of hosts that don’t feel right and don’t look good. That doesn’t mean that cross platform can’t work. Flash is an example that is very much cross platform, and that community very much went the “every app will have its own UI” route… probably TOO far in the other direction ;)

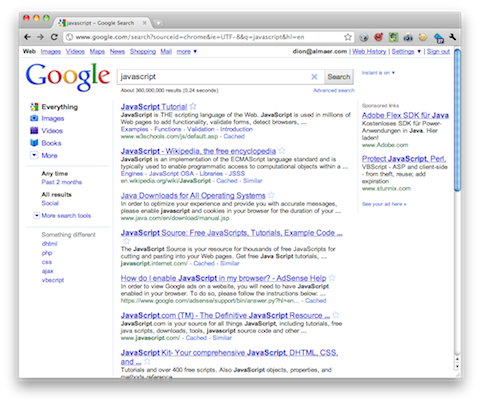

In fact, the Web itself is a fantastic cross platform success. We have argued that if it wasn’t for the massive Web revolution, would non-Microsoft vendors (read: Mac OS X) be in the situation they are now? Or would they have followed in the wake of Atari, Amiga and BeOS? When the Web happened, suddenly the interesting actions that people wanted to do on a computer were dominated by the global scale of the Web (Google, Amazon, Yahoo!, eBay, etc). The Microsoft Office lock-in was gone (aside: it also DID help a lot that Microsoft gave Apple money, got Office over there, and solutions like VMware enabled those few Windows apps that you still wanted to come with you).

The Web was a great cross platform success, even though its rich capabilities in the areas of graphics were laughable…. as I mentioned in an earlier post:

When you look back at many of the earlier designs of the top website brands, they are comical by today’s standards. However, at this same time, technology such as WPF was being touted on the desktop. Why would people visit Web sites when they could experience amazing native Windows and Macintosh experiences?

So, I don’t think you can discount the Web on the merits of “cross platform can’t win” as it already did win once, and at a time when the capability gap was much wider than we now have with HTML5.

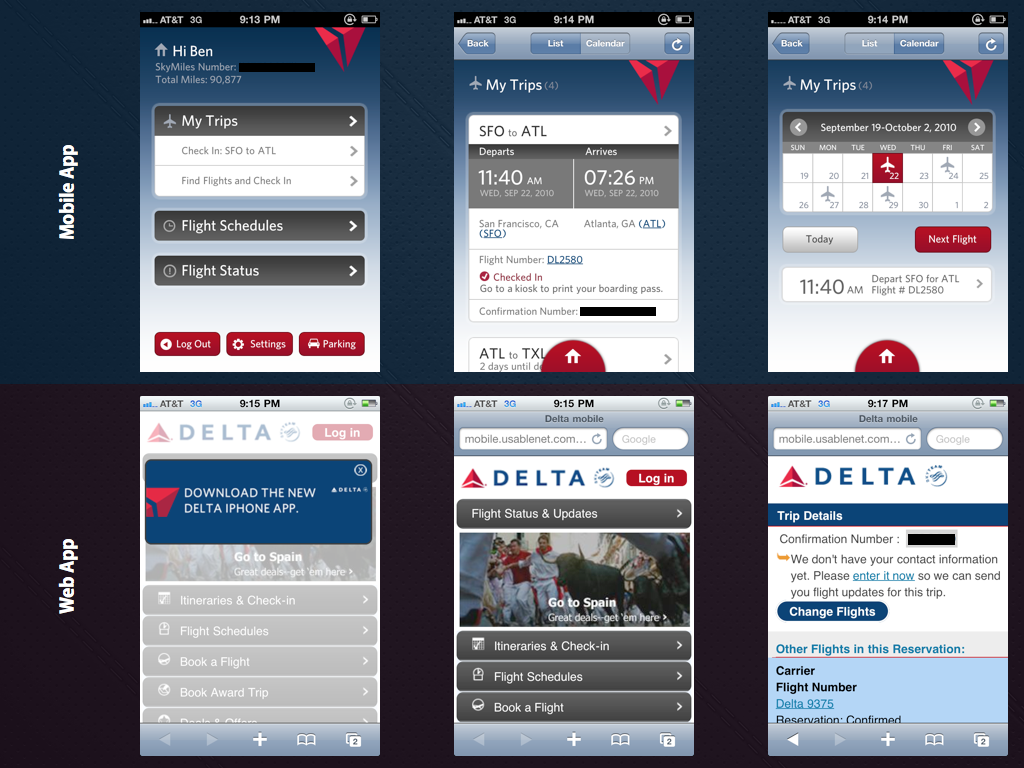

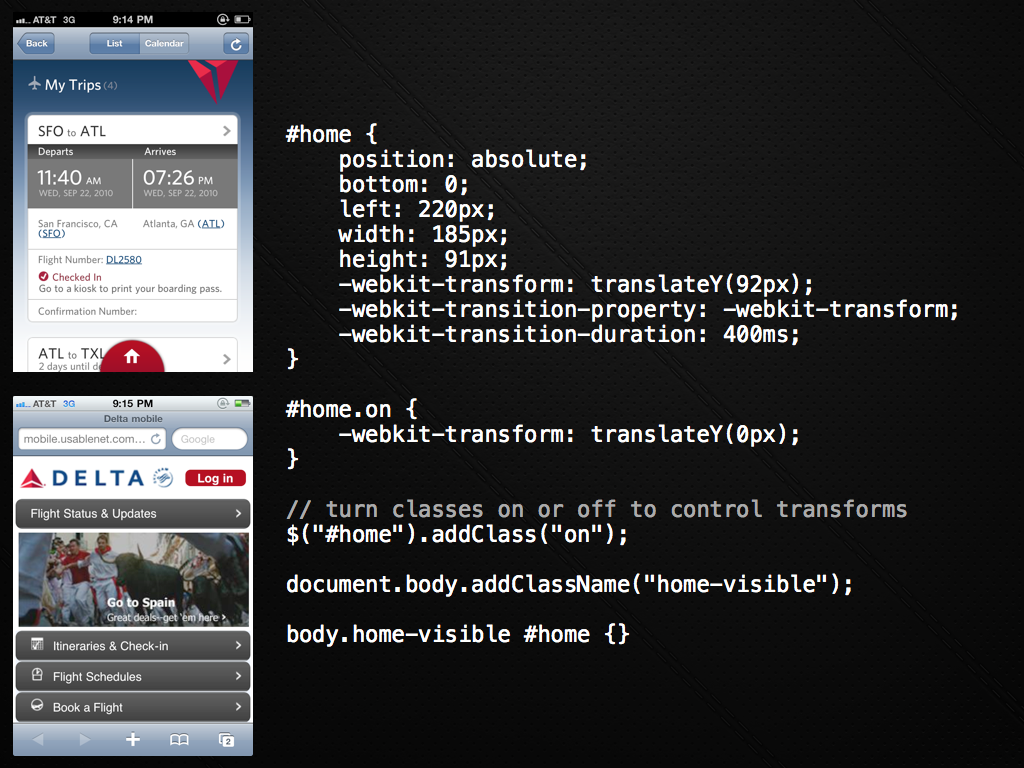

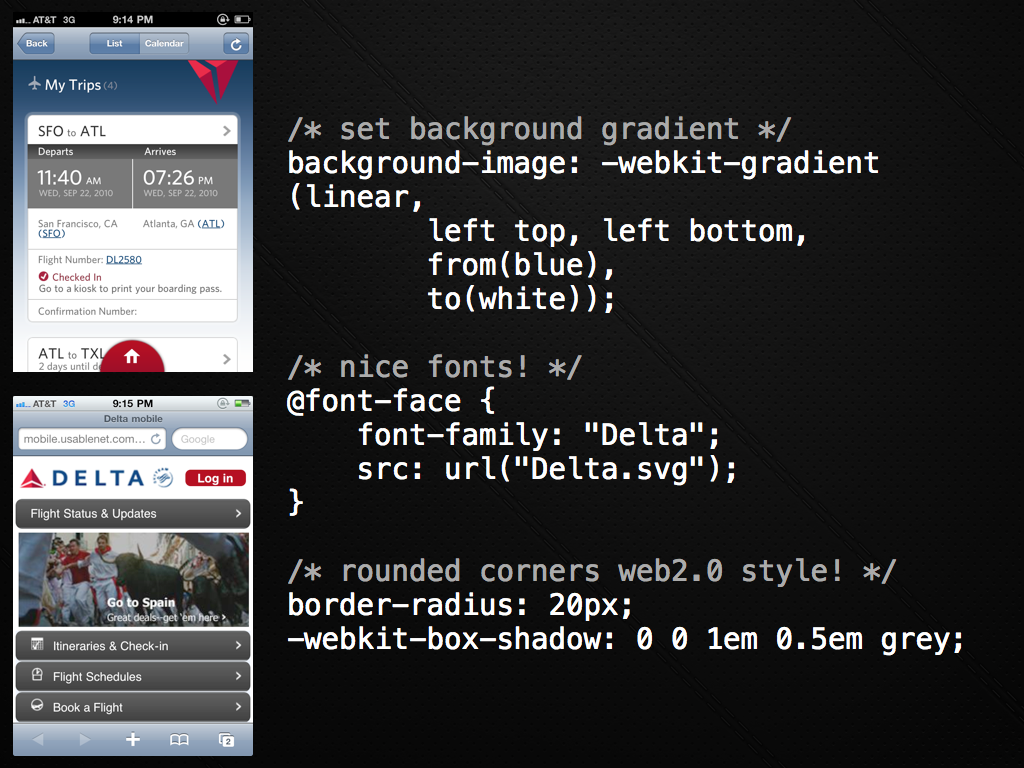

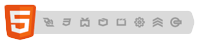

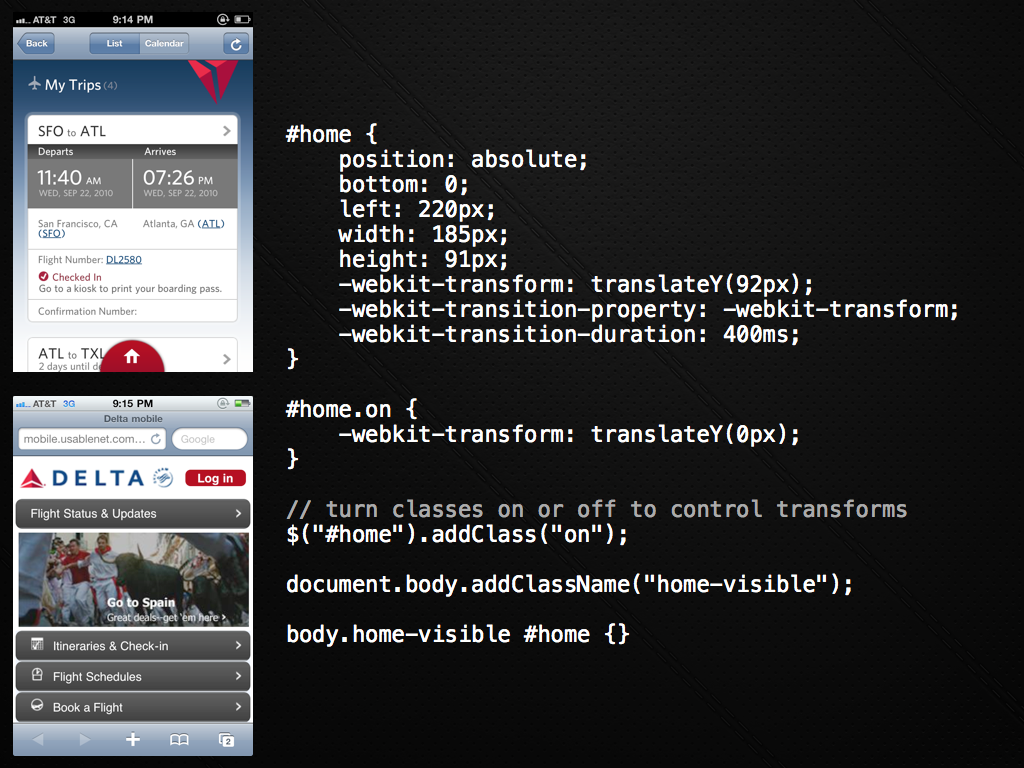

Compare this native app to their Web site!

I teased about the Delta mobile Web application before. If you compare their iPhone application to what you get if you go through a mobile browser, the difference is huge.

But is the reason they are so different due to capability? I think not. I think the reason is much more about structure, legacy, politics, and history.

I remember seeing an early viewing of an Adobe AIR eBay client. The thing was so rich, so much better than the awful eBay site in almost every way. It made you want to cry looking at the website afterwards. Why oh why would eBay have this fantastic client and not spend time on the website where all their customers were? It wasn’t that they were buffoons, it was because they had no legacy in this new world!

The designers had free rein with a blank sheet of paper. They had core concepts and some design language, but total creative freedom. Compare that to the website. If they changed a color by one hex value, users would go nuts! Every time Facebook changes their site there is a massive campaign to change it back for the first 2 weeks.

They also didn’t have to run the gauntlet of the massive codebase that had been built over years to make any of these changes. And the QA. Ugh.

In fact, an entirely new team could be formed to do this work.

This is exactly what I am seeing in mobile. In many companies, if there is a mobile group, they are just that… a very separate group. In a non-mobile-thinking company they are like the old “Mac” group in a Windows-heavy shop that hangs in the corner and is very different.

Other companies are still getting into the world of mobile and realizing that usage patterns are going in that direction. They are bringing in consultants to help out. They are forming new crack teams to take on the challenge. They are realizing that they need to re-think the entire experience, and hopefully realizing that they need to create software as-a-whole in a very different manner.

The bar on the quality of experiences on some of the mobile platforms is very high indeed (and very low on others!) which has the (great) effect of pushing the bar forward.

Will this leave the Web versions behind? Maybe in the short term. Kinda like how the old Twitter website was simple compared to Tweetie and other clients, but #newtwitter is much richer and borrows some of the concepts where they make sense.

I am definitely seeing people swing back to their web experiences. As more platforms embrace touch and cross-device use, they are starting to feel the increasing tax of building totally different applications for each fragmented platform, and then trying to keep them in sync once the 1.0 is complete. Outsourcing the 1.0 is one thing, but the syncing part is hard.

Back to Delta. You will notice that the screenshots on the web side get increasingly poor as you go deeper into the experience. Contrast that with the iPhone version above that is always at a high quality. It is time for them to go back to the web side and sync up.

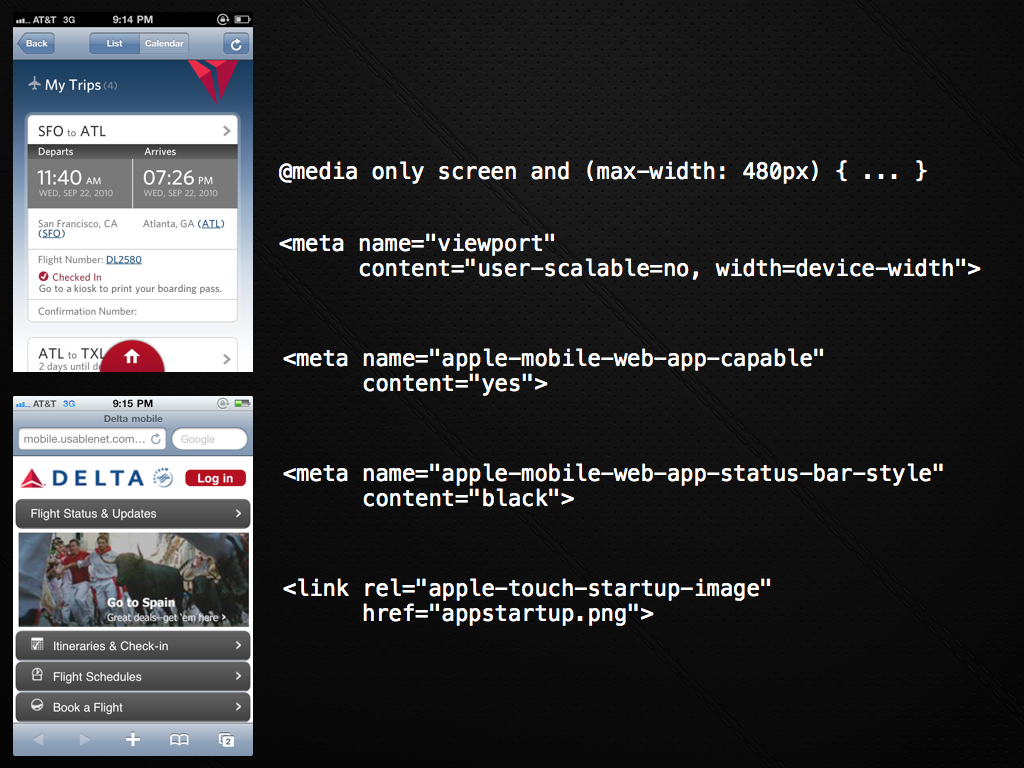

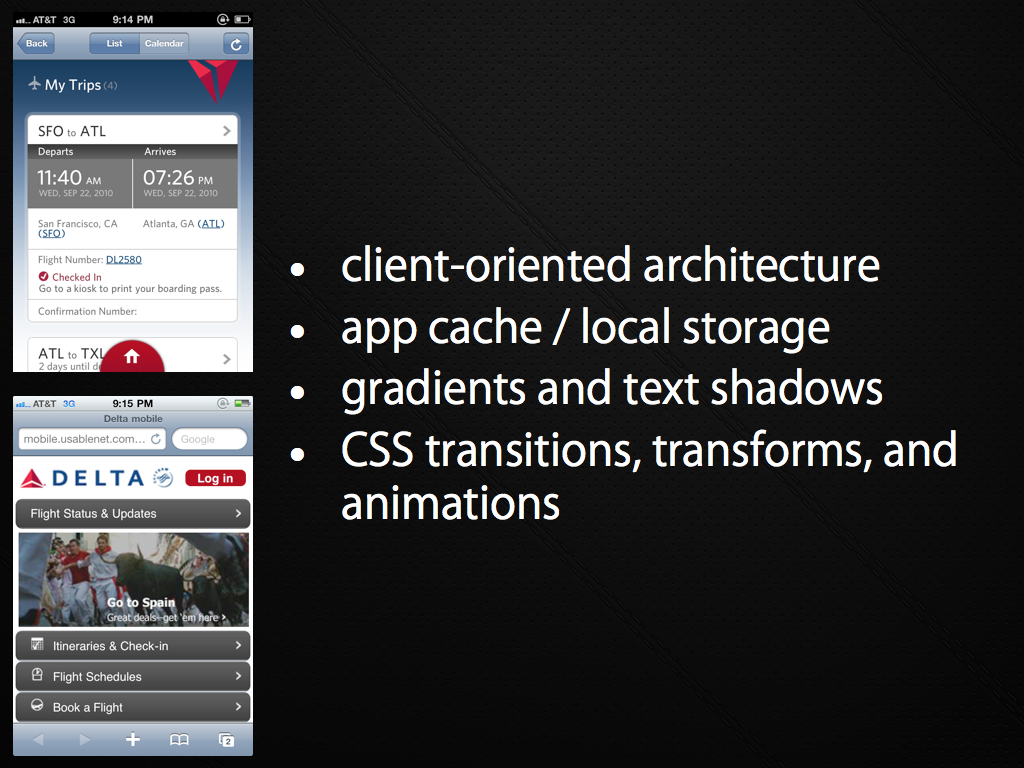

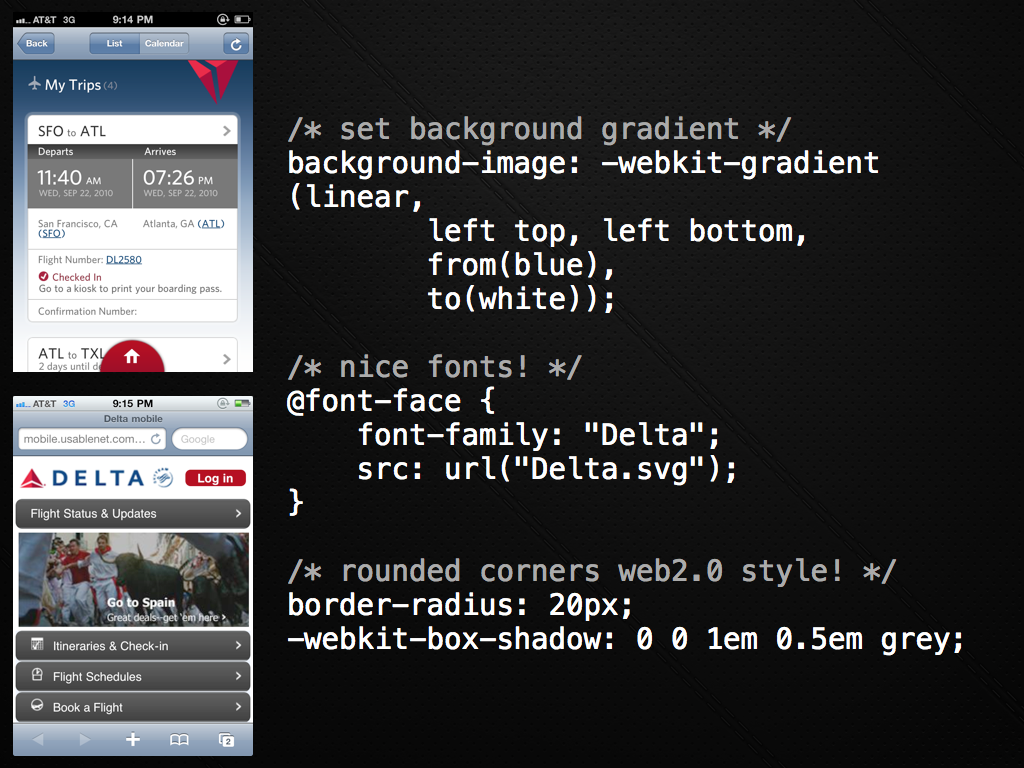

In fact, just before a recent talk, we coded up some of the simple transitions and feel just to show how easy it is to get some of this stuff working via the mobile Web. You can see the simple example here.

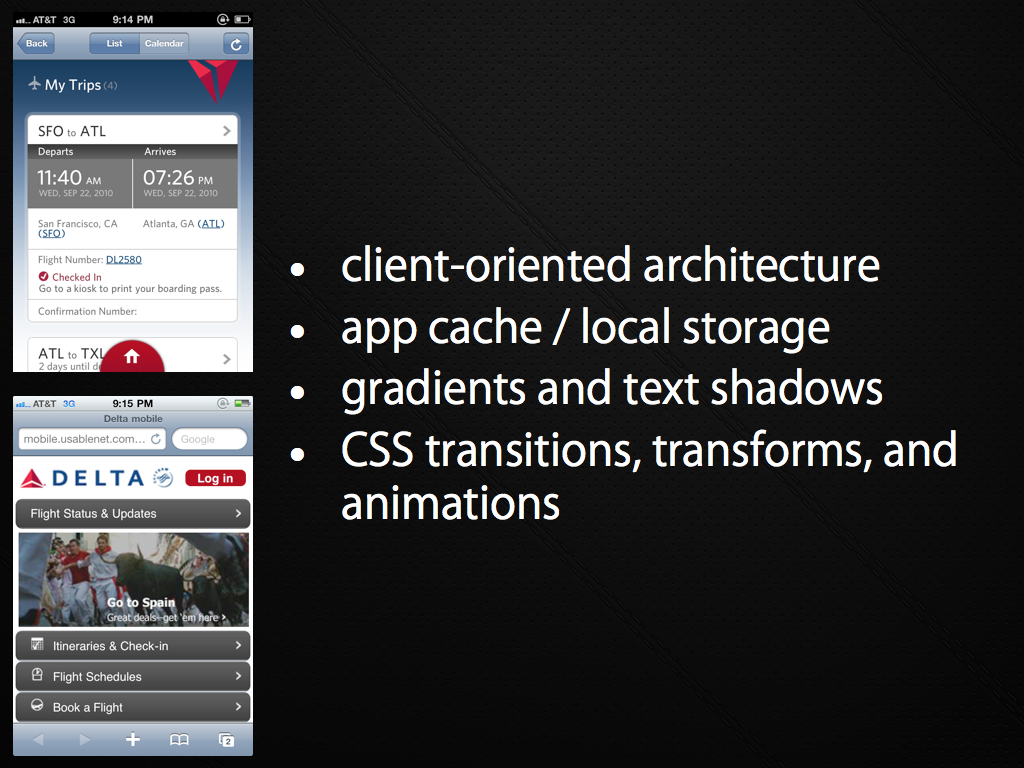

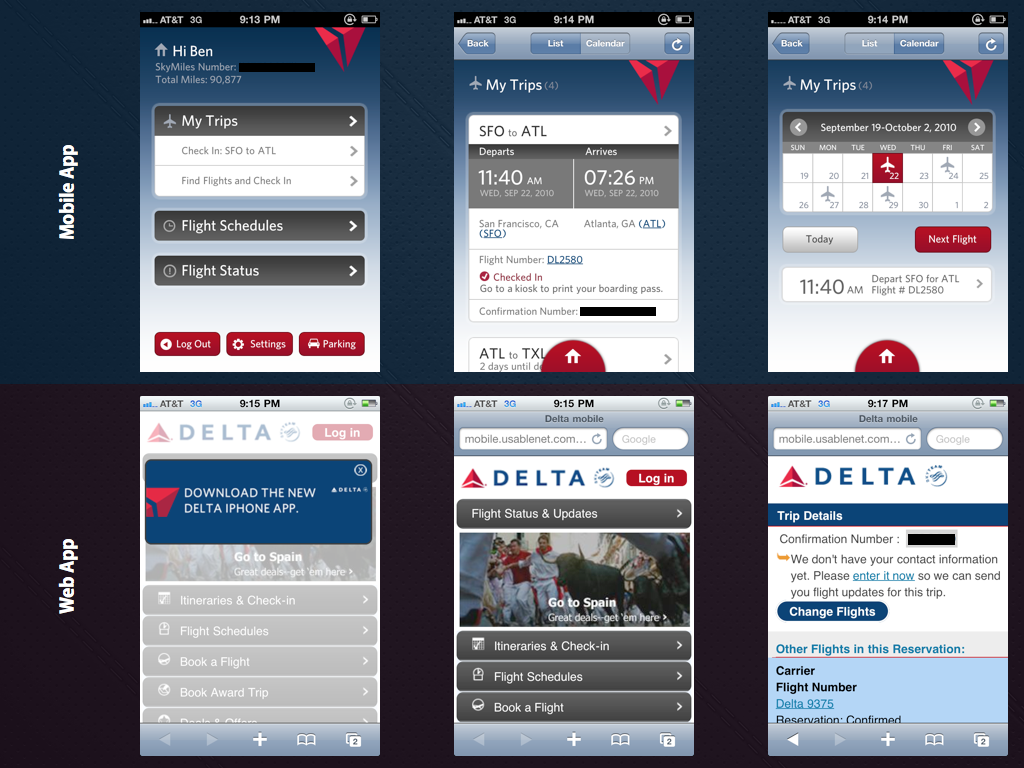

In our Palm Developer Day keynote we shared some of the high level pieces on how to put this all together, the major piece being that you have to really re-think the way that you architect your applications (think: Gmail not server generated HTML, Backbone.js, and more).
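To make the “Gmail, not server generated HTML” idea a bit more concrete, here is a minimal sketch of that style: the server hands over plain data, and the client owns a model and re-renders the view whenever the model changes. This is only loosely in the spirit of Backbone.js and does not use its API. Every name in it (`Flight`, `Model`, `renderFlight`, `escapeHtml`) is made up for illustration, and a real app would write the markup into the DOM rather than into a string.

```typescript
// Hypothetical sketch: none of these names come from Backbone.js or any real API.
interface Flight {
  number: string;
  status: string;
}

type Listener<T> = (value: T) => void;

// A tiny observable model: holds attributes and notifies listeners on change.
class Model<T extends object> {
  private listeners: Listener<T>[] = [];

  constructor(private attrs: T) {}

  // Return a copy so callers cannot mutate the model behind its back.
  get(): T {
    return { ...this.attrs };
  }

  // Merge in new attribute values, then tell every listener about the new state.
  set(patch: Partial<T>): void {
    this.attrs = { ...this.attrs, ...patch };
    const snapshot = this.get();
    this.listeners.forEach((fn) => fn(snapshot));
  }

  onChange(fn: Listener<T>): void {
    this.listeners.push(fn);
  }
}

// Escape text before placing it into markup.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// The "view": turns plain data (for example, parsed JSON) into markup on the client.
function renderFlight(flight: Flight): string {
  return `<li class="flight">${escapeHtml(flight.number)}: ${escapeHtml(flight.status)}</li>`;
}

// Usage: render once, then re-render on every model change.
const flight = new Model<Flight>({ number: "DL 123", status: "On time" });
let html = renderFlight(flight.get());
flight.onChange((next) => {
  html = renderFlight(next);
});
flight.set({ status: "Delayed" });
```

The point of the split is that the same model and data calls can sit behind very different views, which is what makes re-using code across devices plausible.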

Here are some of the slides:

aside: Dave Balmer goes into some depth in his Rockstar apps with HTML5 talks.

Of course, it isn’t all hunky dory. There are still edge cases on getting things performing just right using the Web on the various devices. You have to think “cross platform” again. It may have been nice to ignore that and hack on a native application for a while. But would you rather be porting between proprietary SDKs and languages all day long? Or re-use as much of your code as possible?

And, if for some valid reason you really DO need a bit of native for something, you can break out of jail and do just that. The escape chute is waiting for you.

We have a long way to go on giving developers better access to native capabilities and tools to make building the next generation of apps more of a breeze, but it is doable right now (another aside: webOS kinda proves that right now as the native apps ARE Web apps!)

I will finish with the interesting take from Venture Beat the other day on how the iPhone app is the Flash homepage of 2010. They say:

In the late 1990s, it was common for companies to spend $50,000 to $150,000 for a Flash homepage that looked like a beautiful brochure. However, they soon learned that Flash was cumbersome, slow to load, expensive to build, and hard to update, and moved on to HTML. Now only specialized, high-end sites are Flash only.

The exact same thing has replayed itself on the iPhone. Companies have paid $50,000, $100,000, and more for an iPhone app. Now they have to keep the iPhone app in sync with their regular web site, and have to add additional native apps, each at a high price point, due to the hypergrowth of Android and newly viable platforms like Windows Phone 7