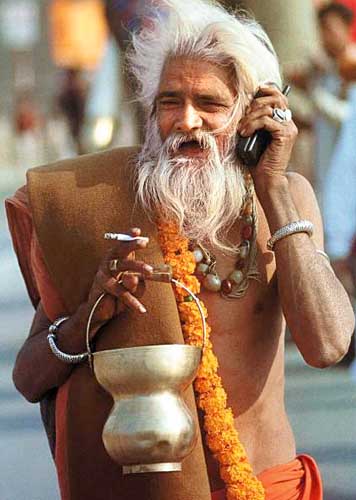

If information is truth, then are we going to be less prudish in the future?

I always enjoy it when Abe Fettig is in town, as we get some time to catch up. At one point in the conversation we were talking about how public everyone is these days. For example, this post will be archived in systems for eternity. If I say something rude about Abe, our great grandkids may know about it :)

As I think about the current political situation, and how prudish it all is … in the US at least, I wonder what the future lies.

What will happen when the presidential candidates are part of the Facebook generation and the paparazzi (at this point, every journalist it seems) will hardly have to dig hard to find some nude inhaling?

If the information is out there that everyone has skeletons of some kind (including the journalists too… back at ya) then maybe it won’t seem like such a big deal? Maybe we won’t expect our politicians to be Clark Kent? Then, if they aren’t pretending to look like Clark, they won’t fall as hard as Elliott “holier than thou, oops” boy.

Facebook now has the “groups” functionality, and I am curious how many people have taken the time to carefully pidgeon-hole their “friends” into these groups. For one, it is a lot harder to do so when you have a few hundred than if the functionality was in there from the start. It is like having to go through all of your photos and tagging them one by one. Everyone wants these groups, to separate the best buds from the work acquaintances, or just the cricket lovers from the ACDC fans. I am curious to see how people use it, or if we are at a point where the kids let it all hang out there and don’t care.

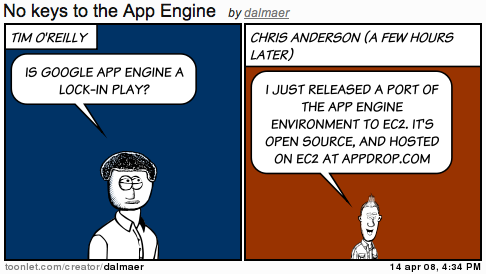

It is one thing to not care when you are a 16 yr old rocker-wanna-be, but when your grandkids can Google your information? Or you run for office? We’ll see how much people like:

But Daddy, it looks like you did a huge amount of ganja, so why can’t I smoke a bowl?